Detecting and Tracking a Trailer Tongue

Introduction

The goal of this project was to develop an algorithm that identifies the trailer tongue in 3D point cloud data. The trailer tongue is the part that extends from the front of a trailer, often with a stick-like shape; its tip is what attaches to a trailer hitch. A driver who wants to hitch a trailer can use the algorithm I developed to locate the trailer tongue without getting out of the vehicle. The driver can then continuously track the relative position of the tongue while backing up toward the trailer, which makes it easier to line up the trailer hitch with the tip of the tongue. In my project, I was able to visualize the detected trailer tongue and report the distance from the tip of the tongue to the trailer hitch in a 3D file format.

Ground Recognition and Alignment

First, I used RANSAC to detect the ground plane and removed the ground points. Using the equation of the ground plane, I then transformed the point cloud so that the ground lies at Z = 0.
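As an illustration of this step, here is a minimal NumPy sketch of RANSAC plane fitting followed by alignment of the ground to Z = 0. It is not the project's actual code: the function names, the 5 cm inlier threshold, the iteration count, and the assumption that the sensor's Z axis points roughly upward are all hypothetical.

```python
import numpy as np

def fit_plane_ransac(points, threshold=0.05, iterations=200, seed=0):
    """Find the plane n.p + d = 0 with the most inliers; return (n, d, inlier_mask)."""
    rng = np.random.default_rng(seed)
    best_mask, best_plane = None, None
    for _ in range(iterations):
        sample = points[rng.choice(len(points), size=3, replace=False)]
        normal = np.cross(sample[1] - sample[0], sample[2] - sample[0])
        norm = np.linalg.norm(normal)
        if norm < 1e-9:  # skip degenerate (collinear) samples
            continue
        normal = normal / norm
        d = -normal @ sample[0]
        mask = np.abs(points @ normal + d) < threshold
        if best_mask is None or mask.sum() > best_mask.sum():
            best_mask, best_plane = mask, (normal, d)
    return best_plane[0], best_plane[1], best_mask

def align_ground_to_z0(points, normal, d):
    """Rotate so the plane normal becomes +Z, then shift the plane to z = 0."""
    if normal[2] < 0:  # assumption: Z is roughly "up", so make the normal point up
        normal, d = -normal, -d
    z_axis = np.array([0.0, 0.0, 1.0])
    v = np.cross(normal, z_axis)
    c = normal @ z_axis
    if np.linalg.norm(v) < 1e-12:
        rotation = np.eye(3)  # already aligned
    else:
        vx = np.array([[0.0, -v[2], v[1]], [v[2], 0.0, -v[0]], [-v[1], v[0], 0.0]])
        rotation = np.eye(3) + vx + vx @ vx / (1.0 + c)  # Rodrigues' formula
    rotated = points @ rotation.T
    rotated[:, 2] += d  # after rotation the plane is z + d = 0
    return rotated
```

Removing the ground then amounts to keeping `points[~inlier_mask]` before or after the alignment.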

Trailer Tongue Recognition

For a trailer with a flat surface

I first assumed that the point cloud contains only the trailer and that the trailer has a flat front face, like a U-Haul cargo trailer.

With this assumption in mind, I clustered the point cloud to remove outliers, found the front plane using RANSAC, and treated everything in front of that plane as the trailer tongue. In the image below, the yellow points belong to the trailer, the blue points are the identified trailer tongue, the red marks the "tip" of the tongue, and the green lines form its bounding box.

Of course, these assumptions rarely hold in real life: there are many types of trailers, and there will most likely be other objects close to the trailer. Hence I needed to develop a more robust algorithm.

Initial Recognition

Clustering

I used DBSCAN as the clustering method. Clustering is necessary to remove outliers and to separate different objects when several are in view. The version of DBSCAN that I used takes a parameter specifying the Euclidean distance within which points are considered part of the same cluster. In my algorithm, this parameter is adjusted based on the approximate distance of the trailer from the sensor. If the object is farther away, the parameter is larger, since there are fewer points but the object should still be clustered as a whole. If the object is very close, the parameter needs to be smaller; otherwise, with so many points, DBSCAN would take a very long time searching for neighbors within a large radius. Hence I specified DBSCAN parameters for different ranges of trailer distance from the sensor.
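The range-dependent parameter choice can be sketched as a simple lookup. The range limits and radius values below are hypothetical placeholders, not the values used in the project; real values would need tuning for the sensor.

```python
import bisect

# Hypothetical range limits (meters) and the DBSCAN radius to use in each band.
# EPS_BY_BAND_M has one more entry than RANGE_LIMITS_M (the last covers "beyond").
RANGE_LIMITS_M = [2.0, 5.0, 8.0]
EPS_BY_BAND_M = [0.05, 0.15, 0.30, 0.50]

def dbscan_eps_for_range(distance_m):
    """Return a larger neighborhood radius for a sparser, more distant trailer."""
    band = bisect.bisect_right(RANGE_LIMITS_M, distance_m)
    return EPS_BY_BAND_M[band]
```

The returned value would be passed as the neighborhood radius to whichever DBSCAN implementation is in use.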

Object tracking

After clustering, I also track the object identified as the trailer so that other vehicles in view don't affect the tracking results. I do this by determining which object is most likely to be the trailer (using a loss function explained later), then continuously tracking the mean of that cluster. In subsequent frames, I filter out all other clusters and keep only the one whose mean is closest to the tracked mean, which ensures I am working only with the trailer cluster.
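A minimal sketch of this per-frame selection, assuming the clustering step returns one integer label per point and marks noise with a negative label (a common convention, but an assumption here). The function name is hypothetical.

```python
import numpy as np

def select_tracked_cluster(points, labels, tracked_mean):
    """Keep the cluster whose mean is nearest to tracked_mean.

    Returns (cluster_points, cluster_mean); the mean becomes the tracked
    mean for the next frame.
    """
    best_points, best_mean, best_dist = None, None, np.inf
    for label in np.unique(labels):
        if label < 0:  # assumed convention: negative labels are noise
            continue
        cluster = points[labels == label]
        mean = cluster.mean(axis=0)
        dist = np.linalg.norm(mean - tracked_mean)
        if dist < best_dist:
            best_points, best_mean, best_dist = cluster, mean, dist
    return best_points, best_mean
```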

Loss function

To find the trailer tongue, I used the optimize.minimize function from SciPy, which finds the minimum of a function. I aggregated many point clouds scanned in the first few seconds to increase detection accuracy. For the loss function, I first stored a 3D point cloud model of the trailer tongue. The goal of the loss function is then to find the part of the point cloud that is the "best fit" to the model.

In mathematical terms, my loss function has 5 parameters: alpha, the up-and-down rotation angle; beta, the left-and-right rotation angle; and the shifts in the x, y, and z directions. To evaluate it, I rotate the model by alpha and beta, shift it by x, y, and z in the respective directions, and return the mean distance from each point in the model to its two closest points in the point cloud of the entire trailer. In theory, this value is smallest when the model best fits the point cloud, which allows the algorithm to locate the trailer tongue within the point cloud. I also added a penalty term that increases the loss if there are too many points in front of the model once it has been placed. The trailer tongue must be at the front of the trailer cluster, so the penalty term helps eliminate cases where a shape similar to the trailer tongue appears somewhere else in the point cloud. After finding the optimal position for the model, I extracted the points in the point cloud closest to the positioned model and stored them as the trailer tongue, which I need to keep track of in future frames.
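The loss described above could look roughly like the sketch below. It is an illustration under stated assumptions rather than the project's code: the rotation axes (alpha about Y, beta about Z), the choice of +X as the "front" direction, the penalty weight, the Nelder-Mead method, and all function names are assumptions. Nelder-Mead is chosen here only because the counting penalty is not smooth.

```python
import numpy as np
from scipy.optimize import minimize
from scipy.spatial import cKDTree

def place_model(model, params):
    """Rotate the model by alpha (pitch) and beta (yaw), then shift by (x, y, z)."""
    alpha, beta, tx, ty, tz = params
    ca, sa = np.cos(alpha), np.sin(alpha)
    cb, sb = np.cos(beta), np.sin(beta)
    pitch = np.array([[ca, 0.0, sa], [0.0, 1.0, 0.0], [-sa, 0.0, ca]])  # about Y (assumed)
    yaw = np.array([[cb, -sb, 0.0], [sb, cb, 0.0], [0.0, 0.0, 1.0]])    # about Z (assumed)
    return model @ (yaw @ pitch).T + np.array([tx, ty, tz])

def make_loss(model, cloud, penalty_weight=0.01):
    """Build loss(params): mean distance to the 2 nearest cloud points, plus a penalty."""
    tree = cKDTree(cloud)

    def loss(params):
        placed = place_model(model, params)
        dists, _ = tree.query(placed, k=2)  # two nearest cloud points per model point
        fit = dists.mean()
        # Penalty: cloud points lying further forward (+X, assumed) than the placed model.
        in_front = np.count_nonzero(cloud[:, 0] > placed[:, 0].max())
        return fit + penalty_weight * in_front

    return loss

def locate_tongue(model, cloud, initial_guess):
    """Minimize the loss, then collect the cloud points nearest the placed model."""
    loss = make_loss(model, cloud)
    result = minimize(loss, initial_guess, method="Nelder-Mead")
    placed = place_model(model, result.x)
    _, nearest = cKDTree(cloud).query(placed, k=1)
    return result, cloud[np.unique(nearest)]
```

A local optimizer like this depends heavily on the initial guess, so in practice the search would likely be started from several candidate positions or from a rough estimate of where the tongue is.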

Tracking after Initial Recognition

After the initial detection, I had to continuously track the position of the tongue. One approach I considered was iterative closest point (ICP), an algorithm that matches two point clouds and finds the transformation that maps one onto the other. This took several attempts.

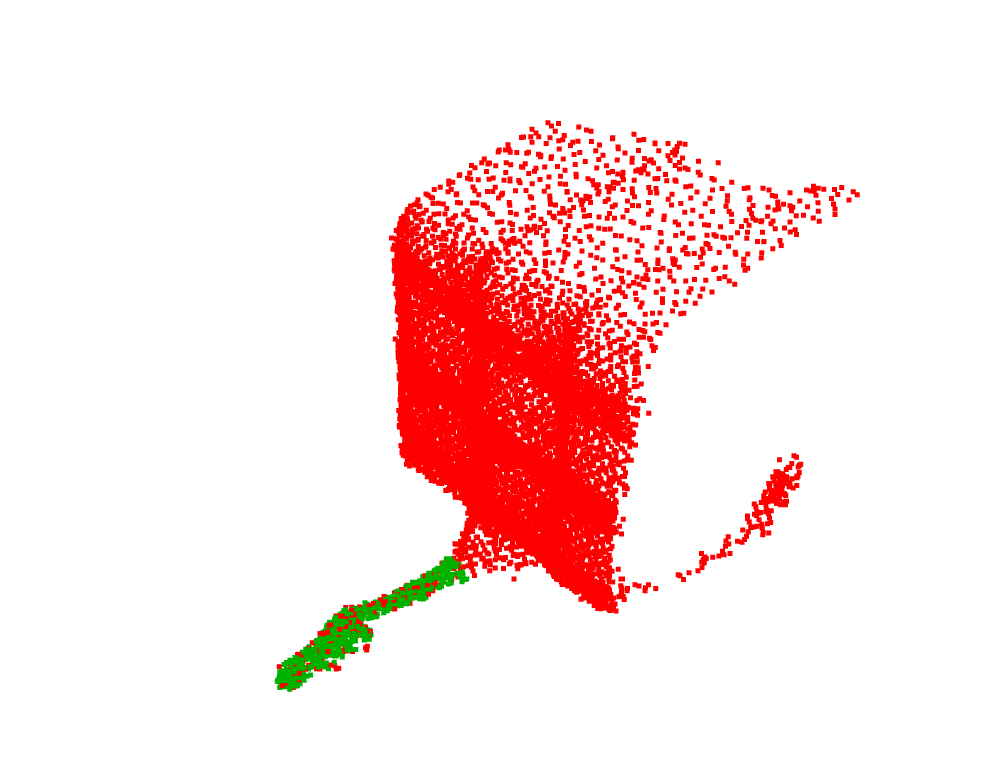

Attempt 1: ICP for each adjacent frame

Here I used ICP to calculate the transformation from the trailer in the previous frame to the trailer in the next frame, then applied this transformation to the trailer tongue from the previous frame. My idea was that if the trailer had "moved" by this much according to ICP, then the tongue would have moved by the same amount, so this should allow me to track it. However, this led to problems in the results. Each ICP run may introduce a small error, and continuously applying these transformations to the stored trailer tongue causes the errors to accumulate. Over time, the trailer tongue can end up significantly off from where it should be. As visible in the video below, the green points (the identified trailer tongue) slowly drift away from where they should match the trailer.

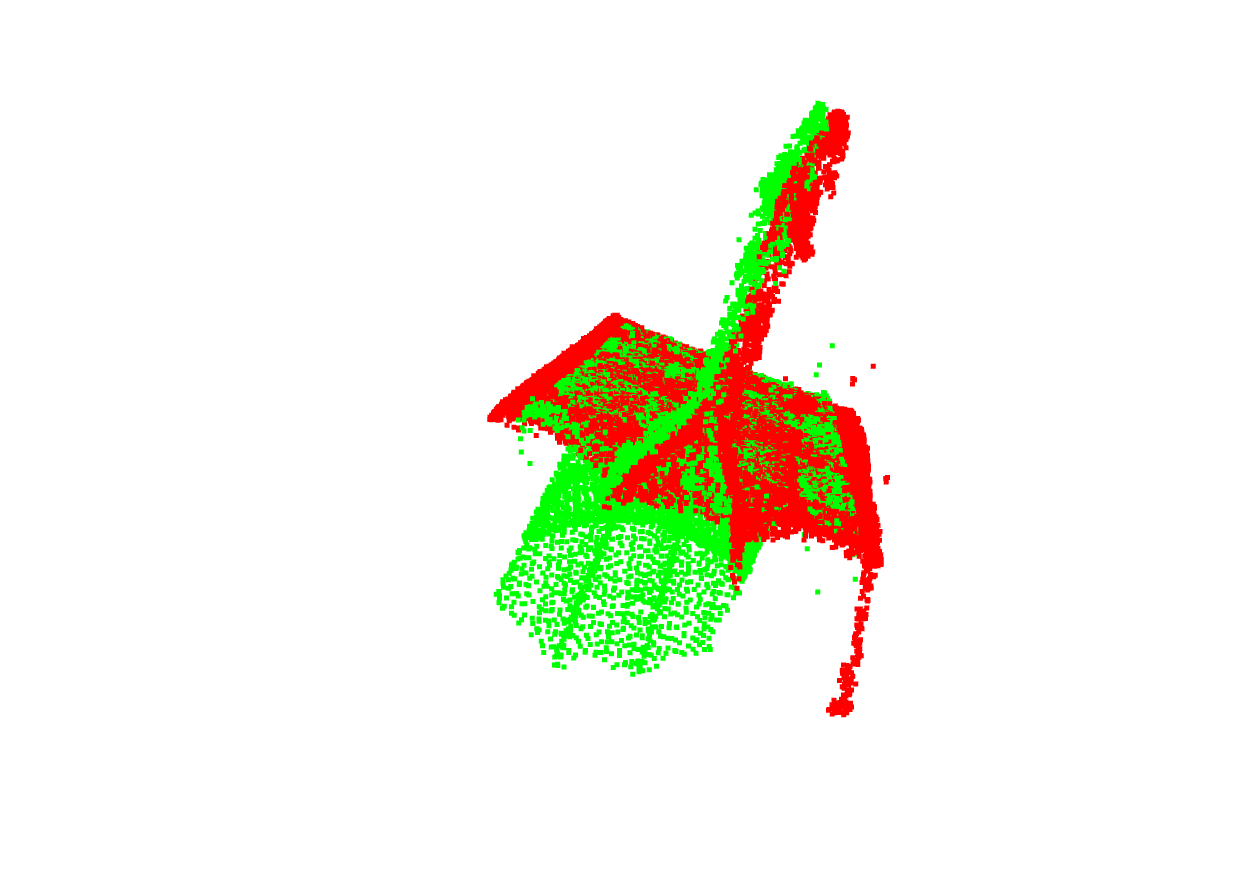
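The drift in Attempt 1 can be reproduced with purely synthetic numbers. In the sketch below, each frame's "ICP" transform carries a small constant error (0.002 rad of yaw and 5 mm of shift, both made-up values), and the tracked tip is updated by chaining these transforms. None of the numbers are measurements from the project.

```python
import numpy as np

def planar_transform(yaw_rad, tx, ty):
    """Build a 4x4 rigid transform: rotation about Z plus a shift in X and Y."""
    c, s = np.cos(yaw_rad), np.sin(yaw_rad)
    transform = np.eye(4)
    transform[:2, :2] = [[c, -s], [s, c]]
    transform[:2, 3] = [tx, ty]
    return transform

def apply_transform(transform, points):
    return points @ transform[:3, :3].T + transform[:3, 3]

def chained_drift(n_frames, yaw_error_rad=0.002, shift_error_m=0.005):
    """Per-frame distance between the true tip and a tip tracked by chaining noisy steps."""
    true_step = planar_transform(0.0, -0.05, 0.0)  # trailer appears 5 cm closer per frame
    noisy_step = planar_transform(yaw_error_rad, -0.05 + shift_error_m, 0.0)
    true_tip = np.array([[4.0, 0.0, 0.5]])
    tracked_tip = true_tip.copy()
    drift = []
    for _ in range(n_frames):
        true_tip = apply_transform(true_step, true_tip)
        tracked_tip = apply_transform(noisy_step, tracked_tip)
        drift.append(float(np.linalg.norm(true_tip - tracked_tip)))
    return drift
```

With these values, `chained_drift(50)` starts at under a centimeter of error and grows to tens of centimeters, even though each individual step is only slightly wrong.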

Attempt 2: ICP from the first frame to each frame

In this version, I kept a "source frame", which starts as the first frame. For each new frame, I found the transformation from the "source frame" to that frame and applied it to the "source frame". I also applied this transformation to the trailer tongue from the previous frame. This resolves the issue from Attempt 1: an ICP error can now be corrected later, because the error was also applied to the "source frame", so the next time ICP finds a transformation from the source frame to the current frame, it accounts for the earlier error and adjusts accordingly. However, there is another issue: the points in the "source frame" all come from the first frame, and the trailer as scanned in the first frame may look very different from the trailer scanned in a much later frame. The image below is a bottom view showing how well the source and the current frame match. The back plane of the two point clouds (in different colors) is matched well by ICP, but the front tongue part is not.

Solution: ICP for each adjacent frame + local adjustment

I realized from Attempt 2 that I couldn't match points from the first frame to a much later frame. Hence I went back to using ICP from the previous frame to the next, but added local adjustments. I used a loss function similar to the one for initial detection, but restricted it to search within a smaller range for each variable. This resolves the build-up of ICP errors, because the position is adjusted in every frame. I also added another penalty term that ensures the tongue is not too far in front of where it should be, by increasing the loss when many points of the detected trailer tongue lie in front of the trailer point cloud. This algorithm worked quite well for tracking the trailer tongue.

Kalman filtering

I also kept track of the tip of the trailer tongue, which was calculated in each frame based on how much the trailer tongue moved. To make it less sensitive to inaccuracies and outliers, I applied a Kalman filter to the velocity of its movement. This made the tip position move more smoothly.
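One possible reading of "a Kalman filter on the velocity" is sketched below: the per-frame displacement of the tip is treated as a noisy velocity measurement, filtered independently per axis with a random-walk model, and then integrated back into a position. The model, the noise variances, and the names are all assumptions, not the project's implementation.

```python
import numpy as np

class VelocityKalman:
    """Per-axis scalar Kalman filter with a random-walk model for velocity."""

    def __init__(self, process_var=1e-4, measurement_var=1e-2):
        self.velocity = np.zeros(3)   # current velocity estimate per axis
        self.variance = np.ones(3)    # uncertainty of that estimate
        self.q = process_var          # how quickly the true velocity may change
        self.r = measurement_var      # how noisy each measured displacement is

    def update(self, measured_velocity):
        self.variance = self.variance + self.q  # predict: uncertainty grows
        gain = self.variance / (self.variance + self.r)
        self.velocity = self.velocity + gain * (measured_velocity - self.velocity)
        self.variance = (1.0 - gain) * self.variance
        return self.velocity

def smooth_tip_track(raw_tips):
    """Integrate filtered per-frame displacements, starting from the first raw tip."""
    raw_tips = np.asarray(raw_tips, dtype=float)
    kalman = VelocityKalman()
    smoothed = [raw_tips[0]]
    for step in np.diff(raw_tips, axis=0):
        smoothed.append(smoothed[-1] + kalman.update(step))
    return np.array(smoothed)
```

Because only velocities are filtered, a single-frame jump in the raw tip is strongly damped, at the cost of a small residual offset that a position measurement would otherwise correct.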

Visualization

To visualize the results, I displayed the points of the identified trailer and of the identified trailer tongue in different colors. I also added text showing the distance of the tip of the trailer tongue from the truck. There are also lines illustrating the distance and direction from the truck, which turn from green to red once the trailer is very close to the truck.
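The distance label and the color switch can be sketched without any rendering library. The 0.5 m threshold and the function name are hypothetical; the source only says the lines turn red at "a very close distance".

```python
import math

def hitch_guide(tip_xyz, hitch_xyz, close_threshold_m=0.5):
    """Return the text label, RGB color, and line segment for the guide line."""
    distance = math.dist(tip_xyz, hitch_xyz)
    # Green while far away, red once the tip is within the (assumed) threshold.
    color = (1.0, 0.0, 0.0) if distance < close_threshold_m else (0.0, 1.0, 0.0)
    return {
        "label": f"{distance:.2f} m",
        "color": color,
        "segment": (tuple(hitch_xyz), tuple(tip_xyz)),
    }
```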

I was also able to add 3D models of both the trailer and the truck to make the results easier to interpret. The location of the trailer model is determined by the position of the tip, and its angle is aligned with the angle of the trailer tongue.

Results

Here is a video showing the algorithm and visualization working together. The trailer tongue can be tracked quite accurately even when the trailer is parked close to other vehicles!

Limitations and Edge cases

Distance from the truck

The trailer should be within approximately 8 meters of the sensor for the algorithm to work; otherwise, there may be too few points to detect the trailer tongue properly.

Distance from other vehicles

If other vehicles or objects are very close to the trailer (< 0.4 m), they may get clustered together with the trailer, which can cause issues in the initial detection or in the ICP tracking algorithm.